Machine learning algorithms are often breathlessly described as ‘game changers’ for healthcare. In April 2019, the NHS announced a £250m investment in artificial intelligence (AI). It hopes AI can reduce pressure on the health service by improving cancer screening, estimating future needs for beds, surgery and drugs, and identifying which patients could be more easily treated in the community.

“We are on the cusp of a huge health tech revolution that could transform patient experience by making the NHS a truly predictive, preventive and personalised health and care service,” said the UK’s Health Secretary Matt Hancock during the announcement.

AI is undoubtedly exciting and has already proved successful in some UK hospitals, where algorithms predict cancer survival rates and have even saved trusts money by reducing the number of missed appointments.

Multiple studies have suggested that machine learning outperforms many of the traditional statistical models used in healthcare. But University of Manchester research, published in the BMJ, suggests that there’s still a long way to go before AI can replace the standard models used to predict a patient’s risk of becoming ill.

This is not the first time in recent months that algorithms have come under fire. In August, the algorithm Ofqual used to standardise A-level results in the UK lowered many students’ grades excessively, a major blow for teenagers across the country.

“There’s a lot of interest in artificial intelligence and machine learning for healthcare,” says study co-author Professor Tjeerd Pieter van Staa from the University of Manchester and the Alan Turing Institute. “But we wanted to see how sensible an investment it really is.”

Predicting who needs statins

GPs currently use a standard statistical tool called QRISK to determine whether their patients have a 10% or greater risk of developing cardiovascular disease within ten years. If the tool suggests that a patient is at risk, they are prescribed statins. These medicines lower the level of ‘bad’ cholesterol in the blood, reducing the risk of heart disease developing.

QRISK was developed using a large dataset of anonymised patient records and a statistical method called the Cox proportional hazards model. “It follows patients over time and determines whether the patient is likely to experience a heart attack or not,” explains Van Staa.
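For readers who want the underlying formula: in its standard textbook form, the Cox model writes a patient’s instantaneous risk (hazard) at time $t$ as a baseline hazard scaled by their risk factors $x$, and estimates the weights $\beta$ by maximising a partial likelihood over the observed events. The notation below is generic; the article does not give QRISK’s actual risk factors or coefficients.

$$h(t \mid x) = h_0(t)\,\exp(\beta^\top x)$$

$$L(\beta) = \prod_{i:\,\delta_i = 1} \frac{\exp(\beta^\top x_i)}{\sum_{j:\,t_j \ge t_i} \exp(\beta^\top x_j)}$$

Here $t_i$ is patient $i$’s last follow-up time and $\delta_i = 1$ if a heart attack or stroke was observed at that time ($\delta_i = 0$ if the patient was censored). A censored patient appears in the denominators only for event times up to their last follow-up, which is how the model stops following someone who leaves a practice.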

However, while research has found QRISK to be an accurate predictor at the population level, other studies suggest it has considerable uncertainty when it comes to an individual’s risk. Some experts think a model that uses machine learning could provide a more accurate result for GPs trying to decide whether a patient should be prescribed statins. Van Staa and colleagues wanted to see if this was true.

They compared 12 popular types of machine learning model with seven standard statistical models (such as QRISK) for predicting whether a person will suffer a heart attack or stroke. The dataset covered 3.6 million patients registered at 391 GP practices in England between 1998 and 2018. Hospital admission and mortality records for these patients were used to test each model’s predictions against actual events.

When the researchers crunched the numbers, they found that the machine learning models and the statistical tools gave very different results, particularly for the patients most likely to experience a cardiovascular event.

For instance, QRISK identified 223,815 patients with a cardiovascular disease risk above 7.5%. But 57.8% of these patients would be reclassified below that threshold by another type of model. If a doctor relied on one of those models instead, these patients would not be prescribed statins.
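The scale of that reclassification is easy to check. The short calculation below uses only the two figures quoted above; because 57.8% is itself a rounded percentage, the resulting head counts are approximate.

```python
# Figures quoted in the article
qrisk_above_threshold = 223_815   # patients QRISK placed above 7.5% risk
reclassified_share = 0.578        # share moved below 7.5% by another model

# Approximate head counts (57.8% is rounded, so these are estimates)
reclassified = round(qrisk_above_threshold * reclassified_share)
still_above = qrisk_above_threshold - reclassified

print(f"Reclassified below 7.5%: ~{reclassified:,}")  # ~129,365
print(f"Still above 7.5%: ~{still_above:,}")          # ~94,450
```

Put simply, well over 100,000 patients flagged for statins by QRISK would fall below the threshold under another model.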

“Initially we were very surprised about our results. But once we dug into it, it became apparent that those models don’t deal with a particular bias, which you often have in a setting where you’re studying long term effects,” reveals Van Staa.

Bias in AI

The researchers soon realised that the differences between the models’ predictions could be explained by what statisticians call ‘censoring’. This occurs when there is incomplete information about a person or measurement, for example when follow-up ends before anyone can see whether the outcome occurred. Many of the machine learning models did not account for the fact that some patients change GP practice, which would skew the figures.

“If a patient drops out of a surgery at day one, the Cox model will stop following that patient,” says Van Staa. “But we found the machine learning model does not consider that.”

Instead, it would assume that the patient had not suffered a heart attack within ten years, even though there is no way of knowing. Similarly, even if a patient has only been registered at a practice for a couple of months, the machine learning model will still treat the record as ten years’ worth of data. This leads to a strong underestimation of clinical risk.
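The effect Van Staa describes can be illustrated with a toy example. The sketch below uses an invented ten-patient cohort (not data from the study) and compares a naive ten-year risk, which counts every patient without an observed event as event-free, with a Kaplan-Meier estimate, a standard censoring-aware method. Kaplan-Meier is swapped in here only because it is the simplest such estimator; it is not the Cox model behind QRISK, and the function names are hypothetical.

```python
# Hypothetical cohort: (years of follow-up, 1 if heart attack observed, 0 if censored).
# Censored = patient left the practice (or records ended) without an observed event.
cohort = [
    (2.0, 1), (0.2, 0), (1.0, 0), (3.0, 0), (4.0, 1),
    (5.0, 0), (6.0, 0), (7.0, 1), (10.0, 0), (10.0, 0),
]
HORIZON = 10.0  # ten-year risk window


def naive_risk(records, horizon):
    """Events divided by everyone: censored patients are implicitly treated
    as having stayed event-free for the whole horizon."""
    events = sum(1 for t, e in records if e == 1 and t <= horizon)
    return events / len(records)


def kaplan_meier_risk(records, horizon):
    """1 - S(horizon) from the Kaplan-Meier product-limit estimator.
    A patient counts as 'at risk' only up to their last follow-up time."""
    survival = 1.0
    event_times = sorted({t for t, e in records if e == 1 and t <= horizon})
    for t in event_times:
        at_risk = sum(1 for time, _ in records if time >= t)
        events = sum(1 for time, e in records if time == t and e == 1)
        survival *= 1 - events / at_risk
    return 1 - survival


naive = naive_risk(cohort, HORIZON)
km = kaplan_meier_risk(cohort, HORIZON)
print(f"Naive 10-year risk:        {naive:.2f}")  # 0.30
print(f"Kaplan-Meier 10-year risk: {km:.2f}")    # 0.51
```

Because patients who leave early are dropped from the risk set rather than counted as healthy, the censoring-aware estimate comes out noticeably higher, mirroring the underestimation the researchers found in the machine learning models.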

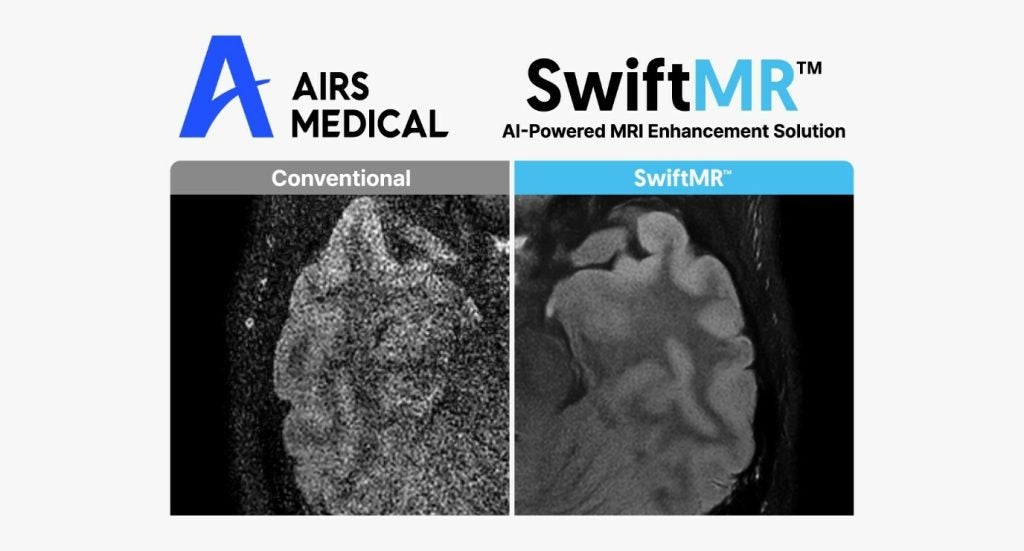

The findings suggest QRISK should not be replaced by another model to help GPs decide treatments for their patients just yet. And Van Staa believes a lot more work is required before AI technology that predicts cardiovascular risk can be safely used in a clinical setting. However, he thinks there are areas machine learning could be safely employed in healthcare such as imaging.

“What we hope is that people reflect and critically assess that new technology actually does what it’s supposed to do,” he says. “So, before you make a major investment, think hard about whether it’s actually going to be useful. Strip away the hype and be aware of the kind of challenges of implementing tools like this for risk prediction.”