Cognitive neuroscientists are increasingly using emerging artificial networks to enhance their understanding of the human brain, according to a presentation at the 25th annual meeting of the Cognitive Neuroscience Society (CNS).

The presentation was given by MIT Computer Science & Artificial Intelligence Lab principal research scientist Aude Oliva. She said: “The fundamental questions cognitive neuroscientists and computer scientists seek to answer are similar. They have a complex system made of components – for one, it’s called neurons and for the other, it’s called units – and we are doing experiments to try to determine what those components calculate.”

Oliva’s work suggests that neuroscientists are beginning to learn much about the role of contextual clues in human image recognition. By using ‘artificial neurons’, similar to lines of code, in neural network models, they can examine the various elements that determine how a human brain recognises a specific place or object.

In a recent study of more than 10 million images, Oliva and colleagues taught an artificial network to recognise 350 different places, such as a kitchen, bedroom, park and living room. They expected the network to learn objects associated with each place, such as a bed with a bedroom, but the network also learnt to recognise people and animals.

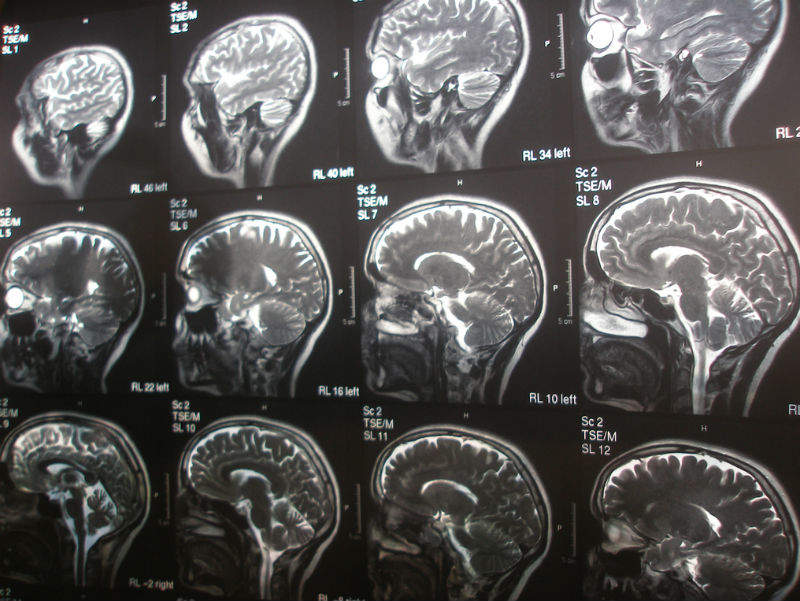

Machine intelligence programs learn very quickly when given lots of data, which is what enables them to analyse contextual learning at such a fine level. While it is not possible to dissect human neurons at such a level, the computer model performing a similar task acts as a ‘mini brain’ that allows researchers to gain a rough idea of how a real brain may function.

Chair of the symposium, Dr Nikolaus Kriegeskorte, believes that these models have helped neuroscientists understand how people can recognise the objects around them in the blink of an eye. He said: “This involves millions of signals emanating from the retina, that sweep through a sequence of layers of neurons, extracting semantic information, for example, that we’re looking at a street scene with several people and a dog.

“Current neural network models can perform this kind of task using only computations that biological neurons can perform. Moreover, these neural network models can predict to some extent how a neuron deep in the brain will respond to any image.”

Kriegeskorte acknowledges that artificial networks cannot yet replicate human visual abilities but believes that, by modelling the human brain, they are furthering understanding of both cognition and artificial intelligence.

Oliva added: “Human cognitive and computational neuroscience is a fast-growing area of research, and knowledge about how the human brain is able to see, hear, feel, think, remember, and predict is mandatory to develop better diagnostic tools, to repair the brain, and to make sure it develops well.”