Patients with paralysis-related speech loss could benefit from a new technology developed by University of California San Francisco (UCSF) researchers that turns brain signals for speech into written sentences.

Operating in real time, the technology is the first to extract the intention to say specific words from brain activity rapidly enough to keep pace with natural conversation.

The software can currently recognise only the set of sentences it has been trained to detect, but the research team believes this breakthrough could act as a stepping stone towards a more powerful speech prosthetic system in the future.

UCSF neurosurgery professor Eddie Chang, who led the study, said: “Currently, patients with speech loss due to paralysis are limited to spelling words out very slowly using residual eye movements or muscle twitches to control a computer interface. But in many cases, information needed to produce fluent speech is still there in their brains. We just need the technology to allow them to express it.”

The Facebook-funded research was made possible by three volunteers from the UCSF Epilepsy Center, who were already scheduled to have neurosurgery for their condition.

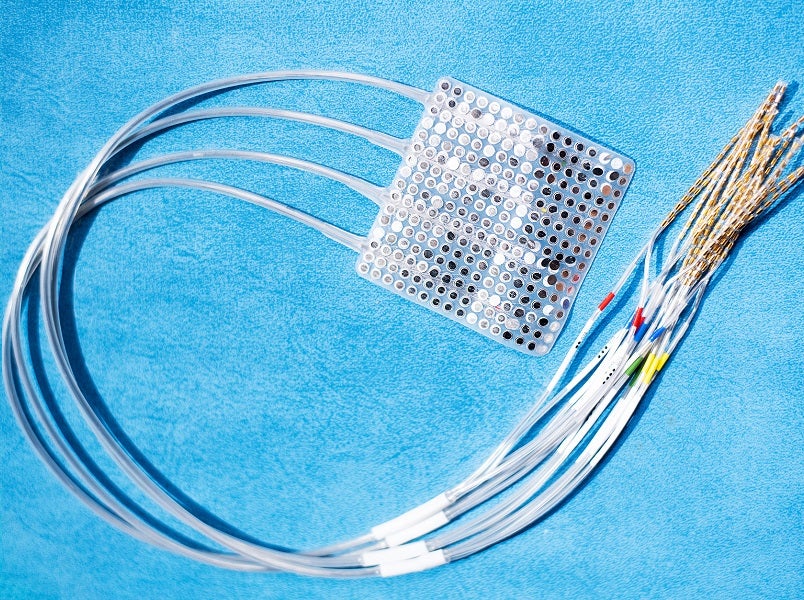

The patients, all of whom can speak normally, had a small patch of recording electrodes placed on the surface of their brains ahead of their surgeries to track the origins of their seizures. Known as electrocorticography (ECoG), this technique provides much richer and more detailed data about brain activity than non-invasive technologies such as electroencephalography (EEG) or functional magnetic resonance imaging (fMRI).

The patients’ brain activity and speech were recorded while they were asked a set of nine questions, to which they responded from a list of 24 pre-determined responses. The research team then fed the data from the electrodes and audio recordings into a machine learning algorithm capable of pairing specific speech sounds with the corresponding brain activity.

The algorithm was able to identify the questions patients heard with 76% accuracy, and the responses they gave with 61% accuracy.

Chang said: “Most previous approaches have focused on decoding speech alone, but here we show the value of decoding both sides of a conversation – both the questions someone hears and what they say in response.

“This reinforces our intuition that speech is not something that occurs in a vacuum and that any attempt to decode what patients with speech impairments are trying to say will be improved by taking into account the full context in which they are trying to communicate.”
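
One way to picture how question context could sharpen answer decoding is a simple Bayesian reweighting of the answer probabilities. The Python sketch below is illustrative only and is not the UCSF team's algorithm: the function name `integrate_context`, the example questions and answers, and every probability are hypothetical, and it assumes each valid answer to a question is equally likely before any brain data is considered.

```python
def integrate_context(question_probs, answer_likelihoods, valid_answers):
    """Reweight decoded answer likelihoods using the decoded question.

    question_probs:     {question: P(question | brain activity while listening)}
    answer_likelihoods: {answer: likelihood of answer from brain activity while speaking}
    valid_answers:      {question: list of answers that make sense for it}
    All three inputs are hypothetical stand-ins for a real decoder's output.
    """
    # Context prior over answers: sum over questions of P(q) * P(a | q),
    # where P(a | q) is assumed uniform over the valid answers to q.
    prior = {answer: 0.0 for answer in answer_likelihoods}
    for question, p_question in question_probs.items():
        answers = valid_answers[question]
        for answer in answers:
            if answer in prior:
                prior[answer] += p_question / len(answers)

    # Posterior is proportional to likelihood times context prior.
    unnormalised = {a: answer_likelihoods[a] * prior[a] for a in answer_likelihoods}
    total = sum(unnormalised.values())
    if total == 0:
        # No answer is supported by the context, so fall back to likelihoods alone.
        total = sum(answer_likelihoods.values())
        return {a: v / total for a, v in answer_likelihoods.items()}
    return {a: v / total for a, v in unnormalised.items()}


# Hypothetical example: the decoder is fairly sure the question was about the room.
question_probs = {"How is your room?": 0.8, "Which instrument do you like?": 0.2}
valid_answers = {
    "How is your room?": ["bright", "dark"],
    "Which instrument do you like?": ["violin"],
}
# On speech-related activity alone, "violin" scores slightly higher than the others.
answer_likelihoods = {"bright": 0.3, "dark": 0.3, "violin": 0.4}

posterior = integrate_context(question_probs, answer_likelihoods, valid_answers)
best_without_context = max(answer_likelihoods, key=answer_likelihoods.get)
```

In this toy case "violin" is the top guess when the answer is decoded in isolation, but it drops below "bright" and "dark" once the likely question is taken into account, which is the kind of effect Chang describes.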

The researchers are now seeking to improve the software so that it can translate more varied speech signals. They are also looking for a way to make the technology accessible to patients with non-paralysis-related speech loss whose brains do not send speech signals to their vocal system.