Machine learning techniques, including natural language processing algorithms, have been used to identify clinical concepts in the complex text of radiologist reports on CT scans.

Researchers from the Icahn School of Medicine at Mount Sinai, New York, conducted the study to test how artificial intelligence could aid medical professionals in future. They believe the technique is an important first step towards developing AI that can interpret scans and diagnose conditions.

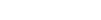

Artificially intelligent computer vision is becoming increasingly common in everyday life. The researchers behind this study theorised that it could be used to help radiologists interpret X-rays and images from machinery such as computed tomography (CT) and magnetic resonance imaging (MRI) scanners.

Eric Oermann, instructor in the Department of Neurosurgery and senior author of the study, said: “The language used in radiology has a natural structure, which makes it amenable to machine learning. Machine learning models built upon massive radiological text datasets can facilitate the training of future artificial intelligence-based systems for analysing radiological images.”

The study focused on training the software to distinguish between normal and abnormal findings in reports written by radiologists. The researchers did this by creating a series of algorithms that taught the computer groups of phrases. These phrases included key terminology such as phospholipid, heartburn and colonoscopy.
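The idea of learning groups of phrases and using them to separate normal from abnormal reports can be illustrated with a minimal sketch. This is not the study's code: it assumes a simple naive Bayes classifier over one- and two-word phrases, and the function names (`ngrams`, `train`, `classify`) and the toy reports are invented for illustration.

```python
from collections import Counter
import math


def ngrams(text, n_max=2):
    """Return all word phrases of length 1..n_max found in a report."""
    words = text.lower().split()
    return [" ".join(words[i:i + n])
            for n in range(1, n_max + 1)
            for i in range(len(words) - n + 1)]


def train(reports):
    """Count phrase occurrences per label from (text, label) pairs."""
    counts = {"normal": Counter(), "abnormal": Counter()}
    docs = Counter()
    for text, label in reports:
        counts[label].update(ngrams(text))
        docs[label] += 1
    return counts, docs


def classify(text, counts, docs):
    """Pick the label with the higher naive Bayes log-score (add-one smoothing)."""
    vocab = set(counts["normal"]) | set(counts["abnormal"])
    scores = {}
    for label in counts:
        total = sum(counts[label].values())
        # Start from the log prior, then add one log-likelihood per phrase.
        score = math.log(docs[label] / sum(docs.values()))
        for phrase in ngrams(text):
            score += math.log((counts[label][phrase] + 1) / (total + len(vocab)))
        scores[label] = score
    return max(scores, key=scores.get)


# Invented toy reports for illustration only, not data from the study.
toy_reports = [
    ("no acute intracranial hemorrhage", "normal"),
    ("no mass effect or midline shift", "normal"),
    ("acute subdural hemorrhage with midline shift", "abnormal"),
    ("large mass with surrounding edema", "abnormal"),
]

counts, docs = train(toy_reports)
label = classify("no acute hemorrhage", counts, docs)
print(label)
```

With only four toy reports the result says nothing about real-world performance; the sketch only shows how phrase counts from labelled reports can drive a normal-versus-abnormal decision.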

In total, the study used 96,303 radiologist reports on head CT scans performed at The Mount Sinai Hospital and Mount Sinai Queens between 2010 and 2016. With the technique achieving 91% accuracy, the researchers demonstrated that AI can automatically identify concepts in text from the complex domain of radiology.

Medical student and first author of the study, John Zech, said: “The ultimate goal is to create algorithms that help doctors accurately diagnose patients. Deep learning has many potential applications in radiology—triaging to identify studies that require immediate evaluation, flagging abnormal parts of cross-sectional imaging for further review, characterizing masses concerning for malignancy—and those applications will require many labelled training examples.”

There is growing interest in AI that can read and interpret medical data. Last year, Oxford-based company Brainomix launched its own imaging software, which uses artificial intelligence to identify and interpret brain damage in stroke patients from CT scans.