A terrorist attack in Paris. The Covid-19 pandemic. A drone strike in Ukraine. An earthquake in Haiti. An explosion in Afghanistan. 9/11. These situations have something in common: massive casualties, limited medical resources, and the need to save as many lives as possible as quickly as possible.

For that, medical professionals have developed triage. This process takes its name from the French word trier, meaning to sort, and consists of grouping patients into categories based on urgency. But the system is flawed, hindered by human judgment, and often results in tragic consequences. Can AI improve it?

Medical triage is in crisis, with controversies emerging worldwide over methodology and a lack of transparency. These problems often lead to inaccurate or incomplete diagnoses and fatal medical errors. Medical personnel are hardly to blame: emergency rooms (ERs) and intensive care units (ICUs) are often overcrowded, and many have not fully recovered from the pandemic. With only a few seconds to dedicate to each patient, medical staff can easily miss a critical element. In understaffed hospitals, triage duties are often offloaded to nurses instead of doctors, and studies have found that insufficient training is one of the main causes of errors.

There is also a need for international standardisation in medical triage, with each country or state having different procedures. This makes co-operation between medical teams very difficult, if not impossible — especially amid humanitarian crises or war. Having a standardised, World Health Organisation-approved AI algorithm to determine and prioritise medical emergencies is a potential solution to this problem.

Should AI be responsible for human lives?

Triage is a huge moral burden for medical personnel, as an error in judgment could lead to multiple lives being lost in a crisis. “Most especially must I tread with care in matters of life and death”, states the Hippocratic oath, meaning that doctors have a moral duty to help people to the best of their ability. But AI will never have this compassion. It can be programmed for that purpose, but it will never have true morality. Regardless of its success rate, taking away human responsibility will change how triage is considered. If a mistake happens, there is no human to blame, only an impersonal AI.

Naturally, there is apprehension regarding decisions taken by AI. For example, self-driving cars have considerably fewer accidents than human drivers but are still widely regarded as dangerous and accident-prone. Human psychology accepts human errors but has higher standards for machines, which are expected to be infallible. Therefore, when an accident happens because of an AI mistake, it receives more attention and criticism. The same applies to AI-powered medical triage.

A solution to this could be not to fully integrate AI in the healthcare environment and to use it only in an advisory capacity, with humans still calling the shots. This is more realistic from both a technical and a moral viewpoint. However, studies have suggested that humans are not sufficiently aware of AI biases in decision-making and are therefore of very limited use in double-checking decisions made by a machine. Humans tend to trust algorithms once they have proven their efficiency and stop critically examining what they do.

Substituting human bias for machine bias

One of the key factors in triage-induced medical errors is, first and foremost, emotional. How can a compassionate individual reasonably maintain impartial judgment when faced with the tragedy of death, pain and suffering? Medical professionals are trained to compartmentalise but will always be affected to a certain extent, whereas an AI will never face that issue. Then again, maybe emotional bias, part of human nature, helps people make good medical decisions.

AI will also face biases. Algorithms will be trained on existing ER data and perpetuate human biases. If a hospital has had racist, misogynistic or otherwise discriminatory triage professionals, these trends will be carried over to the AI and will unfairly affect patients. AI might also develop its own biases, as it will most likely attempt to achieve an optimum survival rate, regardless of how that rate is achieved. If it considers men to have a higher survival rate based on data, it may decide to deprioritise women. Such trends have, for instance, been observed in Amazon’s never-used prototype recruitment AI algorithm, which strongly penalised women’s resumes based on the company’s historical hiring data.
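One simple way to look for this kind of inherited bias is to compare how often each patient group is assigned a high-priority level. The sketch below is a minimal illustration only: the function name, the group labels, the five-level scale (1 = most urgent) and the toy records are all assumptions made for the example, not data or methods from any real system.

```python
from collections import defaultdict


def high_priority_rate_by_group(records, high_levels=frozenset({1, 2})):
    """Return, per group, the share of patients assigned a high-priority level.

    `records` is an iterable of (group_label, triage_level) pairs.
    Levels 1 and 2 are treated as high priority (an assumption for this sketch).
    """
    totals = defaultdict(int)
    high = defaultdict(int)
    for group, level in records:
        totals[group] += 1
        if level in high_levels:
            high[group] += 1
    return {group: high[group] / totals[group] for group in totals}


# Hypothetical toy data: (group label, assigned triage level).
records = [
    ("F", 1), ("F", 3), ("F", 3), ("F", 4),
    ("M", 1), ("M", 2), ("M", 3), ("M", 2),
]

rates = high_priority_rate_by_group(records)
# Gap between the most- and least-prioritised groups.
gap = max(rates.values()) - min(rates.values())
print(rates, gap)
```

A large gap is only a flag for further review. Groups can differ in how seriously ill they are on arrival, so a raw difference in rates does not by itself show that the algorithm is discriminating.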

Evaluation

AI-assisted medical triage is already in use at the Johns Hopkins Hospital in Maryland, US, through the TriageGO system. There, it reduced the proportion of patients unnecessarily assigned to high-acuity status by more than 50%, saving valuable time and ensuring more acute patients were treated more quickly. Other benefits include improved patient flows and consistency and reduced wait times and costs. Objective, in-depth third-party studies of the product remain to be conducted, however. Other research models, trained on data from Korean hospitals, have retrospectively categorised patients and compared the results with decisions made by real medical professionals. They have shown an accuracy of up to 95%, promising favourable results for future implementation.
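To make these two figures concrete, the sketch below shows how a retrospective comparison might compute them: the share of patients for whom a model and clinicians chose the same level, and the share placed in a high-acuity category when the reference level was lower. The function names, the five-level scale (1 = most urgent) and the toy data are assumptions for illustration; this is not how TriageGO or the Korean studies were actually evaluated.

```python
def agreement_rate(model_levels, clinician_levels):
    """Share of patients for whom the model and the clinician chose the same level."""
    if not model_levels or len(model_levels) != len(clinician_levels):
        raise ValueError("inputs must be non-empty and of equal length")
    matches = sum(m == c for m, c in zip(model_levels, clinician_levels))
    return matches / len(model_levels)


def over_triage_rate(assigned_levels, reference_levels, high_levels=frozenset({1, 2})):
    """Share of all patients assigned a high-acuity level although the
    reference level was lower. Levels 1 and 2 count as high acuity here
    (an assumption for this sketch)."""
    if not assigned_levels or len(assigned_levels) != len(reference_levels):
        raise ValueError("inputs must be non-empty and of equal length")
    over = sum(
        a in high_levels and r not in high_levels
        for a, r in zip(assigned_levels, reference_levels)
    )
    return over / len(assigned_levels)


# Hypothetical toy data for eight patients.
clinician = [1, 2, 3, 3, 4, 2, 5, 3]
model = [1, 2, 3, 2, 4, 2, 5, 3]

agreement = agreement_rate(model, clinician)
over_triage = over_triage_rate(model, clinician)
print(agreement, over_triage)
```

Agreement with clinicians is a limited yardstick: if the clinicians' original decisions were themselves flawed, a model that matches them 95% of the time reproduces most of those flaws. A stronger reference would be the patient's actual outcome, where such data exists.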

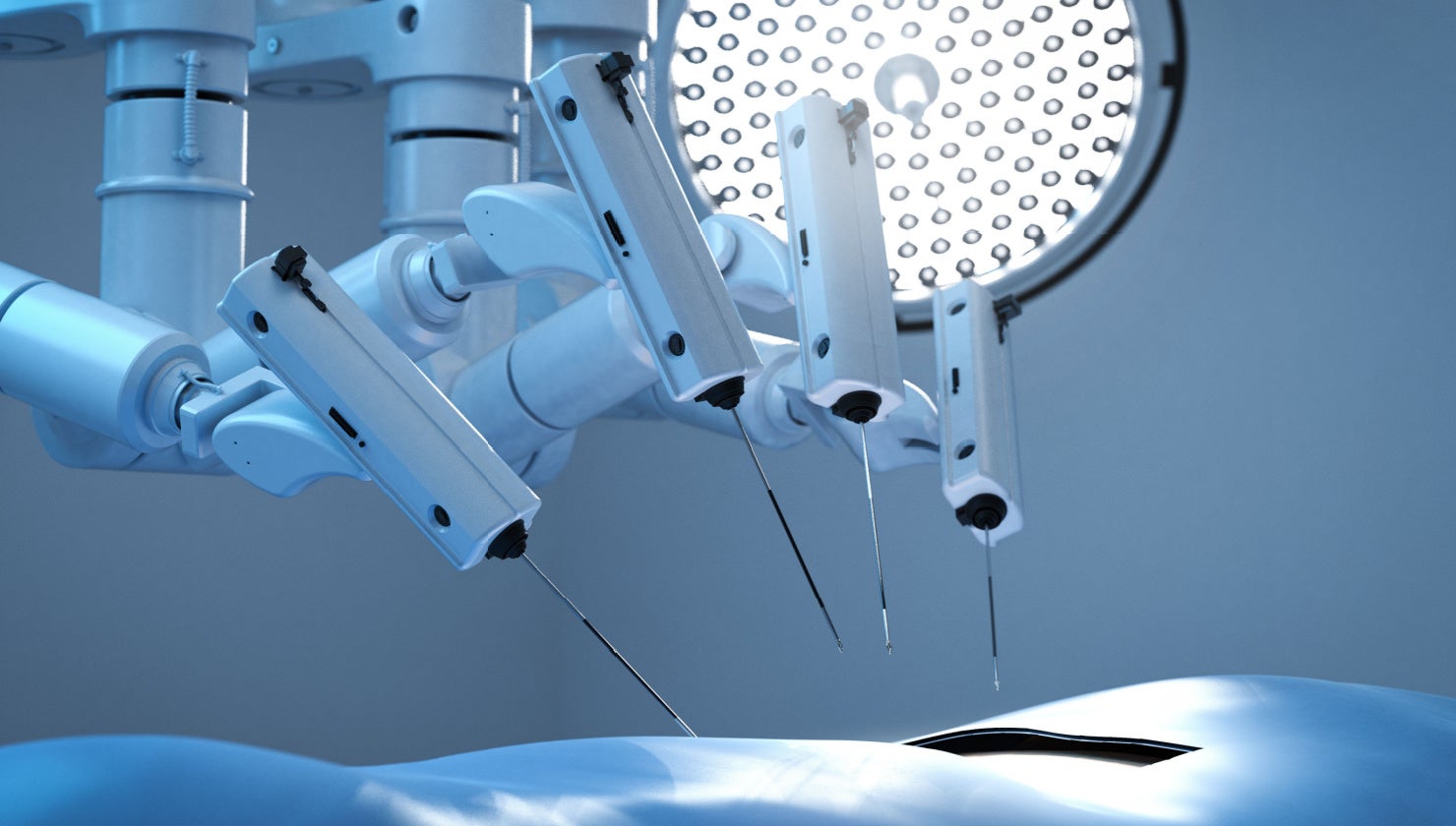

AI has the potential to adapt in real time to available resources if connected to other smart medical devices and will adjust triage criteria more optimally than a human ever could in these conditions. Nevertheless, we are unlikely to see widespread AI-powered triage in public hospitals anytime soon, primarily due to ethical considerations. Due to more readily available funding and fewer moral issues, more complete uses could emerge in the military, both in war situations and in military hospitals. AI triage could also be used to assist dispatchers in emergency call centres to allocate resources more efficiently and reduce the burden on ERs and ICUs. In the future, AI triage could become integrated into a wider IoT [internet of things] healthcare environment, using personal smart sensors to analyse vitals in real time and assess urgency even before medical personnel arrive on the scene. As with many other uses of AI, holistic regulation is urgently needed. The technology will work, but will humanity want it? Something uncanny remains about AI making life-or-death decisions.